The Morelia leg finished over the weekend, and while the players made their way across the Atlantic, we could relax a bit after the frenetic action in Morelia. When I wished for a more exciting tournament than in recent years, I hardly expected this. More than half the games have been decisive so far (15/28), and most of the draws have been hard-fought as well. While some of the play has been sub-standard (a couple of blunders even I could have avoided), it has resulted in each participant recording at least 3 decisive games, with each one winning and losing at least one. Vishy is in the lead at the moment, by a half point over Topalov and Shirov. It is great to regularly see Vishy play sharp lines like the Najdorf and the Semi-Slav again.

Here’s hoping for more of the same for the rest of the tournament.

Archive for February, 2008

Linares resumes tomorrow

February 27, 2008

Rating a ‘B’ in a Project Delivery Sanity Test

February 23, 2008

Most people in our industry recognize that ThoughtWorks is one of the leading software consulting companies today. A lot of this can easily be attributed to the number of superstars present in the company – the number of books, papers, talks and podcasts that emanate from there is testament to that. When one of them writes (or speaks), we stop to read (or listen). So when one of them puts up a checklist of items that will likely decide the success or failure of a project, I decided to take the test to see how our project ranks (I won’t bother trying to decide if the checklist itself is accurate or not).

1. A Delivery Focus (B-)

There are two parts to this question. I definitely think our feature set gets built with a usability focus, though I don’t think we satisfy the time constraints he sets out for getting software into production. We have definitely gotten much better at this over the past year but are still some way off from ideal.

2. Clear Priorities (A-)

I think our priorities (though always subject to change) are pretty clearly understood within the team. When they become muddled, we take time out to ensure everybody’s on the same page.

3. Stakeholder Involvement (D)

I have always thought this has been our weakest attribute to date. The funny thing is that the focus has always been on improving this aspect of our software development process, and a different approach is taken each cycle, with nothing major to show for it. The biggest issue is the lack of a true product-owner. Again, hopefully that’s changing for the better as well.

4. Business Analysis (C+)

I might be way off on this one – the problem is that I don’t get to see the business analysis. And truth be told, I would probably not understand it too well either. This might easily be an A-. I hope it is.

5. Team Size and Structure (A)

This is one of our strong suits, definitely.

6. Planning and Tracking (A-)

I would have thought we would get A on this one, but with Amit’s definition of this criterion and his emphasis on getting software in the hands of the customer as early as possible, I think we lack a bit in that area.

7. Technical Architecture (B)

At least with the code I work in – the architecture bit itself is quite good – and we refactor as and when required (and not just for the sake of it). Yet, the build times are absolutely horrendous and hinder developer productivity. We are making efforts now to cut down on the build times but until we do, it’s only a solid B.

8. Testing, QA practices (B-)

An A+ for our testing practices, but a non-existent QA department.

9. Understanding software development (A+)

Definitely the strongest attribute. Everybody who is in a managerial position definitely understands software development and is on the same page as the devs.

I know some of my workmates read this blog, so I’d definitely be interested in knowing whether you agree with these metrics (more or less) and whether you would grade any differently. What do you think?

Third time lucky

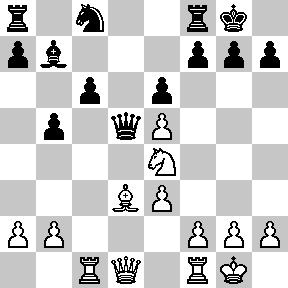

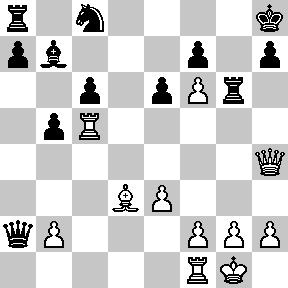

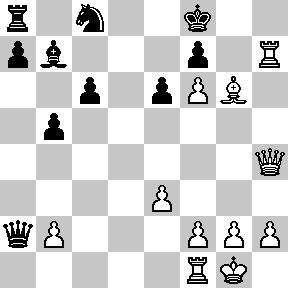

February 22, 2008

I finally got to play a game with white and it resulted in a win for me, albeit with a bit of luck. Here’s the link to replay the game:

1.e4 Nf6 2.e5 Nd5 3.d4 d6 4.c4 Nb6

I don’t think I have played against the Alekhine defence in OTB play, though I have played it a number of times on-line. I believe the two main moves in this position are f4 and Nf3. I have played both before but am more comfortable after the exchange on d6. White has more space and a better control of the center.

5.exd6 exd6

Capture with the c-pawn is more common, but there is nothing wrong with this move either.

6.Nc3 Bf5

Now this was a bit more unusual. Normal moves are Nc6 or Be7, as it is still not clear where the light squared bishop should land.

7.Nf3 Nc6 8.a3

Preventing 8…Nb4 targeting the weak c2 square. Bd3 was probably better, exchanging off the light-squared bishop.

8…a5 9.Be2 [Bd3] g6 10.O-O

The computer suggests 10.d5 Ne7 11.Qd4 Rg8 12.0-0 Bg7 13.Qd1 where white is slightly better, having prevented black from castling kingside. And he is well positioned to take up the initiative on the queenside.

If 10.d5 Ne5 11.Nxe5 dxe5 12.c5 Nd7 13.Be3 white is again slightly better because of his space advantage and active pieces.

10…Bg7 11.Bf4 a4 12.Qd2 [Bd3] O-O 13.Nb5

The point of this move is to target the weak c7 pawn. He cannot move it because then d6 is weak. For the same reason, d6 cannot be moved because of the c7 pawn. My aim is to try and get c5 in at some point, to take advantage of his weakness. But black finds an active plan to thwart my idea.

13…Na5 14.Qc3 Nb3 15.Rad1

I wonder if 15.Rae1 was better, putting it on the open file. I was saving e1 for my other rook but it was probably more essential to take control of the e-file as quickly as possible.

15…Nd7 16.Nd2 Ndc5 17.Nxb3 Nxb3 18.Bf3 Qc8 19.Rfe1 Bg4 20.Re7 Qf5

And here, I fell into the same trap as I did two games back. I spotted a tactical idea, but I didn’t spend enough time going over it carefully. I could feel the blood rushing to my head, and I didn’t calm myself enough to spot my hanging rook on d1.

21.Nxc7??

The first big mistake of the game. After this, I am just lost. g3 was a much better option.

21…Qxf4 22.Re4 Qxe4 23.Bxe4 Bxd1 24.Nxa8 Rxa8

The tactical spurt is over. Black has three pieces and a rook against my queen and bishop.

25.Qd3 Bg4 26.h3 Bd7 27.Bxb7 Re8 28.Bd5 Nxd4 29.h4 Bf5 30.Qd1 Nc2 31.Kh2 Re1 32.Qd2 Re8??

Black’s turn to return the favor. I am now seemingly back from the dead. I can take advantage of the fact that his light-squared bishop doesn’t have any other squares on the b1-h7 diagonal from which it can protect the knight. 32…Be5+ 33.g3 was much better, pinning the g-pawn and not allowing me to play g4. But, by this time, black was in severe time trouble, having about a minute to finish move 35. Normally, that should be enough for someone, but my opponent was labouring and muttering, trying to find the perfect move.

33.g4! Bxg4 34.Qxc2 Re2 35.Qc1? 1-0

The penultimate mistake. Qxa4 and I hold the advantage. This move allows black to play Bxb2 and regain the advantage.

Instead, he tried to play Rxf2 and his flag fell playing the move, so I won on time. Nevertheless, with Rxf2, the advantage is definitely with me after Kg3, and I will win another piece.

Communications of the ACM turns 50

February 16, 2008

I opened up the 50th Anniversary issue (Jan 2008) this morning and was astounded. In honour of the occasion, they have dug through the past to provide historical tidbits and made an effort to look ahead and predict the future.

Throughout my college education, the one field of Computer Science that captured my imagination the most was algorithms. The three people whose work influenced me most, in some order, were Fourier, Turing and Dijkstra. So, you can imagine my excitement when the first ‘article’ in the magazine was a reprinting of a letter Dijkstra sent to the editor of the magazine in March 1968, criticizing the use of go-to statements in programming languages. Written in very simple, easy-to-read English (I always remembered him for the simplicity with which he presented his work), one particular quote stands out for me, emphasizing the mathematician in him:

“Let us now consider repetition clauses (like, while B repeat A or repeat A until B). Logically speaking, such clauses are now superfluous, because we can express repetition with the aid of recursive procedures.”

Functional programming, anyone?
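To make Dijkstra's remark concrete, here is a minimal sketch in Python of "while B repeat A" expressed as a recursive procedure. The names `while_repeat` and `sum_to` are made up for illustration and do not come from the letter. Python does not optimize tail calls, so this only works for modest iteration counts before hitting the recursion limit.

```python
from typing import Callable, TypeVar

S = TypeVar("S")


def while_repeat(condition: Callable[[S], bool], body: Callable[[S], S], state: S) -> S:
    """'while B repeat A' written as a recursive procedure instead of a loop."""
    if not condition(state):
        return state  # B is false: stop and hand back the final state
    return while_repeat(condition, body, body(state))  # do A once, then recurse


def sum_to(n: int) -> int:
    """Sum 1..n by threading a (counter, total) pair through the recursion."""
    _, total = while_repeat(
        lambda s: s[0] <= n,                # B: counter has not passed n
        lambda s: (s[0] + 1, s[1] + s[0]),  # A: add counter to total, advance
        (1, 0),
    )
    return total
```

The loop state is passed explicitly as an argument rather than mutated in place, which is the shape most functional languages give to repetition.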

Other things I found interesting:

- Even the little things we barely think about while working today have a history: “Niklaus Wirth won the ACM A.M. Turing Award in 1984. In his address published in the February 1985 issue…He identified the need to separate requirements and capabilities into the essential and the ‘nice to have.’”

- The first meeting of the ACM was way back in 1947, when it was named the Eastern Association for Computing Machinery; the “Eastern” was dropped later. But of particular significance to me is that the original 78 members actually met at my alma mater, Columbia University.

- A nice quote by Jon Bentley: “The intellectual pleasures and financial rewards of solving one programming problem, it turns out, are just the prelude to solving many more.” And talking about his “Programming Pearls” column from the 1980s, “For the most part, they were fun stories of how clever colleagues had phrased and solved programming problems, not infrequently discovering that ‘we have met the enemy, and he is us.’” Touché.

- Jeannette M. Wing, in the piece “Five deep questions in computing”, poses what she considers the most important questions for us to answer:

  - P = NP?

  - What is computable?

  - What is intelligence?

  - What is information?

  - (How) can we build complex systems simply?

- Rodney Brooks, addressing the next 50 years, “Expect new ways to understand computation, computational abstractions for our computing machinery, and connections between people and their information sources, as well as each other.”

- Jon Crowcroft proposes a new Internet architecture that says goodbye to the current packet-switching model in favour of a paradigm borrowed from physics that uses “the notion of wave-particle duality to view a network with swarms of coded content as the dual of packets…The data is in some sense a shifting interference pattern that emerges from the mixing and merging of all sources.” Hmm…

Will this year’s Linares be any different?

February 16, 2008

Ok, ok, Morelia-Linares, as it has been for the past couple of years. Three decisive games (with 3 Sicilians and one win each for white and black) in the first round certainly raise hopes that it will be. Linares, even more than the other super-tournaments, is a draw-haven. With a double round-robin format among 8 of the elite players resulting in only 4 games a day (I actually prefer their old format where they had 7 players in a double round-robin, with one player sitting out each day), it is very rare to get more than 1 decisive game a day.* In the past few editions, the one day of 3 decisive games was negated by the couple of days with all draws.** Let’s hope this one’s different.

It was interesting to see Anand bring out the Najdorf against Shirov. He seems to mostly prefer the Petroff and the Ruy nowadays, but seems to bring out the Najdorf against specific players – Carlsen and Judit Polgar come to mind. I think this is because all four of them are similar kinds of players (except maybe Polgar who isn’t as positionally savvy as the other three, I think), and he feels confident he can out-think them in any kind of position. I am especially keen on observing his upcoming two games with Carlsen – if I am not mistaken, he has beaten Carlsen in their last 3 meetings – twice in the same tournament last year and then last month at Wijk aan Zee. Carlsen will of course be riding high after his tournament victory there.

Obviously, I am rooting for Anand to come good – this being his last tournament with classical time-controls before his match with Kramnik in October (I believe he is playing in the Monaco Amber Rapid-Blindfold tournament and probably a couple of other rapid tournaments before then), it is his chance to re-emphasize his World Champion status. Obviously, Kramnik is an extremely strong match player and has played many more matches of late. He will of course have to get past Kramnik’s Petroff (or worse, Berlin), and though he came close to it at Wijk last month, I think he will have to switch to 1.d4 to make inroads. With black, he’s recently moved from the QID to the Semi-Slav, which I think brings him a lot more winning opportunities. Let’s wait and see.

* Having said that, I prefer Linares to Dortmund. Though Dortmund might have more decisive games because they usually have one or two players not at the very top, the constant shuffling of formats (double round-robin, round-robin within groups followed by knockouts, and the current format with single round-robin) has left me disinterested. Moreover, the current format results in the tournament finishing before I’ve really had a chance to get into it as a fan.

** Linares was, of course, a very exciting event back in the day when first Karpov, then Kasparov, were dominating chess. Karpov’s famous +9, 11/13 performance in 1994 is the stuff of legend, while Kasparov’s domination there, year after year, is also simply mind-boggling.

My claim to shame

February 13, 2008

As mentioned previously, here’s the link to the game.

I don’t think there’s any need to annotate this miniature though I’ve had a look and satisfied myself that I did in fact play horribly.

Stop mocking me already

February 12, 2008

We all use mock objects for testing. Since the advent of IoC and DI, it is an extremely common pattern to mock or stub out dependencies for a class, inject them in, and then test the class without worrying about access to a database or an HTTP server from a unit test.

This is all very good in theory but how well does it work in practice? I regularly use both mocks and stubs and it is important to realize which one is needed for a particular unit test or a test class. It is an art, but with experience, you tend to make the right choice more often than not.

So, where is this rant coming from? I recently moved over to a codebase which makes extensive use of both mocks and stubs, but especially mocks. When using mocks, you need to specify the expected behaviour of each mock. When mocks are abused, your unit tests tend to define the implementation of your methods. I HATE THAT. Unit tests are meant to test your implementation of a tiny piece of functionality, not dictate the implementation. When you add two lines of code to your method, you shouldn’t have to change a bunch of unit tests which now fail because you called an unexpected method on your mock object. With a stub, to a large extent, this problem is muted, but can still surface from time to time.
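The difference is easier to see side by side. The sketch below uses Python's standard `unittest.mock` rather than the Java tooling this post is about. `Registrar`, `InMemoryStore` and both test functions are hypothetical names invented for the illustration. The first test pins the exact sequence of calls made on the mock, while the second uses a hand-written stand-in and checks only the outcome.

```python
from unittest.mock import Mock, call


class Registrar:
    """Hypothetical class under test: stores a user and returns a greeting."""

    def __init__(self, store):
        self.store = store

    def register(self, name: str) -> str:
        if not self.store.exists(name):
            self.store.save(name)
        return f"welcome {name}"


class InMemoryStore:
    """Hand-written stand-in: simple behaviour, no expectations about calls."""

    def __init__(self):
        self.names = set()

    def exists(self, name):
        return name in self.names

    def save(self, name):
        self.names.add(name)


def brittle_mock_test() -> None:
    store = Mock()
    store.exists.return_value = False
    Registrar(store).register("ann")
    # Pins the exact call sequence: adding, say, a store.audit(...) call to
    # register() would fail this test although the behaviour is unchanged.
    assert store.mock_calls == [call.exists("ann"), call.save("ann")]


def outcome_stub_test() -> None:
    store = InMemoryStore()
    greeting = Registrar(store).register("ann")
    # Checks only the observable result, so register() can be refactored freely.
    assert greeting == "welcome ann"
    assert store.exists("ann")
```

Both tests pass today. Only the first one has to be rewritten when `register()` gains an extra collaborator call.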

For the past year, I worked on building a SIP server. As a team, we decided we should test real SIP in our unit tests. Acceptance tests were at a much higher level of abstraction and weren’t ideal for testing low level SIP messages. If we mocked out the SIP layer, we would a) spend 50% of our time mocking out complex JAIN-SIP objects to test our code, b) find our acceptance tests were failing because of incorrect behaviour at SIP level and spend another 20-30% of our time redefining our mocks to keep our tests passing and c) more than likely, stop maintaining our tests because of the nightmare caused by the first two points.

Instead, we chose to use SipUnit to represent SIP endpoints that can be used in testing our SIP server. We were testing real functionality from our unit tests and though it increased build times significantly, the benefits gained by saving developer cycles and the rise in confidence that our unit tests were testing exactly what they should be testing were more than adequate compensation for the build times.

In the same way, using an in-memory Hypersonic database to test all DAO code is far preferable to using mocks to test database access. I would much rather peek directly into a table in a database from my test (using a select) than assert with a mock or stub the exact SQL string used in an insert statement. I really don’t care if there’s more whitespace in the SQL than what I expect in my mock, and more often than not, I will copy my expected SQL from the test into the code (or vice-versa, depending on whether I write test-first), so the tests pass while the code may still contain errors. You might of course want to stub or mock out your DAO code when testing other parts of your application, or if you deem it necessary, continue using the in-memory database.
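As a rough illustration of the idea, here is a sketch that uses Python's built-in `sqlite3` in-memory database as a stand-in for Hypersonic. `UserDao` and its table are hypothetical. The test inserts through the DAO and then reads the table back with a select, so the irregular spacing in the insert statement has no effect on the result.

```python
import sqlite3


class UserDao:
    """Hypothetical DAO; the SQL spacing is deliberately irregular."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn

    def create_schema(self) -> None:
        self.conn.execute(
            "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"
        )

    def insert(self, name: str) -> int:
        cur = self.conn.execute("INSERT  INTO users (name)   VALUES (?)", (name,))
        self.conn.commit()
        return cur.lastrowid


def check_insert_is_visible_in_table() -> list:
    conn = sqlite3.connect(":memory:")  # fresh in-memory database for this test
    dao = UserDao(conn)
    dao.create_schema()
    new_id = dao.insert("anand")
    # Peek into the table directly rather than asserting on the SQL string.
    rows = conn.execute("SELECT id, name FROM users").fetchall()
    conn.close()
    assert rows == [(new_id, "anand")]
    return rows
```

Because the assertion is on the stored row, a typo in the SQL fails the test instead of being copied into the expectation.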

While I am at it, another point: I really enjoyed the book xUnit Test Patterns (part of the Martin Fowler series), from which I picked up several useful tips and tricks for unit testing. In it, the author goes to great pains to state that when testing concurrent code, it is essential that unit tests don’t introduce concurrency, but instead test functionality serially and deterministically, preferably using mocks and stubs. I disagree. While it is important and necessary to have the kind of tests he mentions, it is also important to unit test the concurrent code. What better place is there to test race conditions in concurrent code than at the lowest possible layer of abstraction?
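A minimal sketch of what such a low-level concurrency test could look like, again in Python with only the standard library. `Counter` and `hammer` are hypothetical names. The test runs real threads against the class and checks that no increment was lost. A passing run does not prove the absence of races; it only makes a missing lock much more likely to show up.

```python
import threading


class Counter:
    """Hypothetical shared counter guarded by a lock."""

    def __init__(self):
        self._value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        with self._lock:
            self._value += 1

    @property
    def value(self) -> int:
        with self._lock:
            return self._value


def hammer(counter: Counter, threads: int = 8, per_thread: int = 10_000) -> int:
    """Run real threads against the counter and return the final value."""
    start = threading.Barrier(threads)  # release all workers at the same time

    def worker() -> None:
        start.wait()
        for _ in range(per_thread):
            counter.increment()

    pool = [threading.Thread(target=worker) for _ in range(threads)]
    for t in pool:
        t.start()
    for t in pool:
        t.join()
    return counter.value
```

A test would then assert that `hammer(Counter())` equals `threads * per_thread`, alongside the serial, deterministic tests the book recommends.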

None of this is to say that mocks and stubs don’t have their uses. As with anything else, don’t use them blindly. Use them with caution; use them when they are the right tools for the job.

A new personal record

February 8, 2008

I reached a new low for OTB performances last night, when I resigned on my 14th move, one move away from mate. For some reason, I spent lots of time figuring out variations and then, happy with my choice, I would make my move. While waiting for my opponent to mull things over, I would spot the one critical move which I hadn’t considered, and the one move he would end up playing. It happened two or three different times, enough to spell doom.

I am still unsure if I will try and rid this game from my memory banks or whether I will spend time analyzing it.

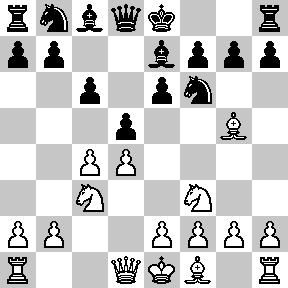

My first serious game in two and a half years

February 6, 2008

You can replay the game in its entirety or walk through the game along with the analysis here.

1.e4 c5 2.Nc3 e6 3.f4 d5

In the closed Sicilian, I have never come out of the opening satisfactorily. Not wanting to open up the position before I’ve castled, I would normally play d6, Nf6, Be7, resulting in a passive position, and my attempts at queenside play were almost always too slow. So I tried to play actively in the center, hoping to avoid similar issues.

4.e5 d4 5.Ne4 Nh6

I was already in an unfamiliar position in the opening. Having decided to allow d5 early on and needing to prevent Nd6, this was the only square left for my knight. On the plus side, though the knight’s developed on the rim, it prevents g4 and f5, two key pawn pushes for white in the closed Sicilian. And with most of the play happening on the kingside, it turns out to be a useful square for the knight.

6.Nf3 Be7 7.Bc4

I don’t play the white side of the closed Sicilian, so I am not an authority on it. But the plan with g3 and Bg2 seems more potent to me.

7…O-O 8.O-O b6 9.d3 Bb7 10.Neg5!?

I don’t know if he saw my little trap here or intended to make this move all along. The trap being, if he made some other move here, say Qe2, I had the subtle 10…b5!, winning a piece.

10…Nc6 11.Bxe6??

I think this is a big mistake. Strictly on material count, he might be better, winning a rook and two pawns for a knight and bishop. But he no longer has an attack against my king, while I can start building pressure on his kingside. Additionally, his light squared weaknesses are even more brutally exposed with the loss of his bishop as its counterpart sits pretty on the long diagonal.

11…fxe6 12.Nxe6 Qd7 13.Nxf8 Rxf8 14.Qe2 Nd8 15.Bd2 Ne6 16.Ng5 Qc6 17.Nxe6 Qxe6 18.h3?!

Weakening even more squares around his kingside, though it’s hard to suggest more useful moves. Maybe his plan was to follow up with g4, preparing f5, but this also opens up his king to enemy attacks. A better plan might have been to play on the queenside, attacking black’s pawn structure.

18…Nf5 19.Kh2 Bh4 20.Qg4 Bg3+ 21.Kg1 Qd5 22.Rf3 Bh4 23.b3?!-+

Once again, a strange move. On his queenside, white needs to challenge the pawns on the dark squares, not give up total control. Anyway, this doesn’t hurt him because of black’s next few moves, where he throws away his big advantage and ends up losing.

23…Ne3??

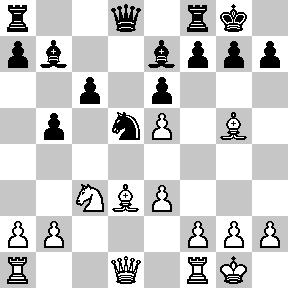

I had been eyeing the e3 square for my knight for quite a while. With my direct attack not coming to fruition, I felt I should return two minor pieces for his rook and then use the passed e-pawn (after Bxe3 dxe3) to press for victory. But it was much better to keep the pieces on the board and build a much stronger attack with 23…Qf7 24.Rff1 h5 25.Qe2 [25.Qd1 Qg6 Qe2] Ng3 26.Qf2 Nxf1 27.Qxf1 Bg3, at the end of which I am totally winning, up a full piece and about to win the f4 pawn to boot.

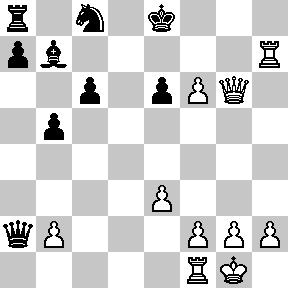

[Analysis diagram after 27…Bg3]

24.Bxe3 dxe3 25.Qxh4 Qd4 26.Qe1 Bxf3 27.gxf3 e2+?

Qxf4 was much stronger.

28.Kg2 Rxf4?

Once again Qxf4 was much stronger, putting pressure on the f3 pawn. After this mistake, I have a thankless task while white can play on without fear.

29.e6 Qe3 30.Qf2 Qxe6 31.Re1 Rf6 32.Qxe2 Rg6+ 33.Kf2 Qxe2+ 34.Rxe2 Kf7 35.f4 Rh6 36.Kg3 Rg6+ 37.Kf3 Rh6 38.Rh2 Ke6 39.Ke4 Kd6 40.h4 Re6+ 41.Kf3 Kd5 42.c3 Re1 43.Re2 Rf1+ 44.Kg4 Rg1+ 45.Kf5 Rh1 46.Re7 Rxh4 47.Rd7+ Kc6 48.Rxg7 Rh3 49.Rxa7 Rxd3 50.Rxh7 Rxc3 51.Kf6 c4 52.Rh8 Kc7?

Kb7 was needed, preventing white’s rook from arriving at a8 and protecting the a2 pawn. b5 was also possible.

53.bxc4 Rxc4 54.f5 Rc2 55.Ra8 Kb7 56.Ra3 b5 57.Kf7 Rc7+?

A wasted rook maneuver. Better would have been 57…b4, forcing the trade of the queenside pawns. Of course, I was lost long before this, so this only hastens the end.

58.Kg6 Kc8?

The last big mistake. For the second time in the game, I allow the white rook to come to the a8 square!

59.f6 Rc2 60.f7 Rg2+ 61.Kh5 Rf2 62.Ra8+ 1-0

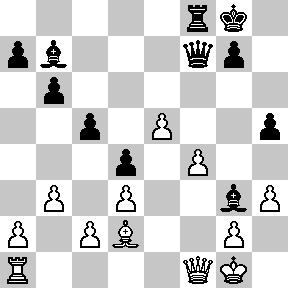

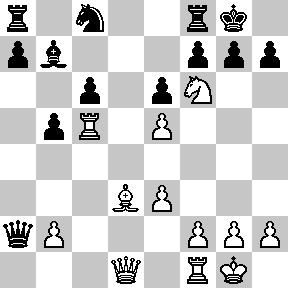

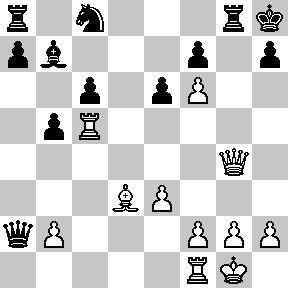

Material imbalance on one side of the board

February 1, 2008

1.d4 d5 2.c4 e6 3.Nc3 Nf6 4.Bg5 c6 5.Nf3 Be7

Black decides not to go for the super-sharp Moscow variation starting with 5…h6 6.Bh4

6.e3 O-O 7.Bd3 dxc4 8.Bxc4 b5 9.Bd3 Nbd7 10.O-O Bb7 11.Ne5 Nxe5 12.dxe5 Nd5

Not in itself a bad move. I thought Nd7 was probably better, putting pressure on e5, though I was planning to follow up with f4. In fact this does have the benefit of placing the knight on an active square, and if I decide to trade knights, capturing with the c-pawn solidifies the black pawn structure.

13.Bxe7 Nxe7

I would have preferred to take with the queen instead, leaving the knight on a good square.

14.Ne4 Nc8

My knight is extremely well placed on e4, threatening to jump into d6. It also prevents c5 just yet (activating black’s bishop). Black’s move is designed to prevent Nd6, but this is too early. Black is already behind in development and is effectively “undeveloping” a piece here. 14…Qb6 was probably a much better move here, again threatening c5, and connecting black’s rooks. Nc8 might have become an option, if and when I plonked my knight onto d6.

15.Rc1 Qd5?

16.Rc5!

Bringing my rook to a very active square and forcing the queen to go back to d7 or d8.

16…Qxa2?

Choosing to grab a pawn instead. Black’s kingside is dangerously weak while all of my pieces (except for the rook on f1) are perfectly positioned for an all-out attack. I wouldn’t say black is lost yet, but he is already in a lot of trouble.

17.Nf6+!

17…gxf6?

This leads to forced mate. 17…Kh8 is not pleasant by any means, but was the only chance.

18.Qg4+ Kh8 19.exf6 Rg8

20.Qh4 Rg6

21.Rh5

Bringing us to the title of this post. White has 3 pieces (plus the pawn on f6, which controls g7, a very important square) attacking the black kingside, while black has just the one rook defending. Even though black is up a knight, the only thing that matters in this attack is the number of pieces on the black kingside.

21…Kg8 22.Rxh7 Kf8 23.Bxg6

Eliminating the last black defender.

23…fxg6 24.Qh6+ Ke8 25.Qxg6+

With mate to follow next move, black resigned.

On another note, for the first time in two and a half years, I played a serious over-the-board game in league play. I lost, but I think I had some winning positions which I couldn’t find a way to convert. I plan to sit down and analyze the game over the weekend and hopefully have it up here as well. My next game is on Monday night.